
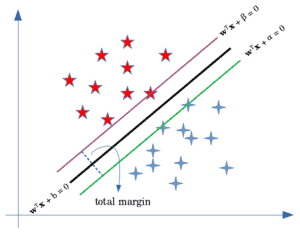

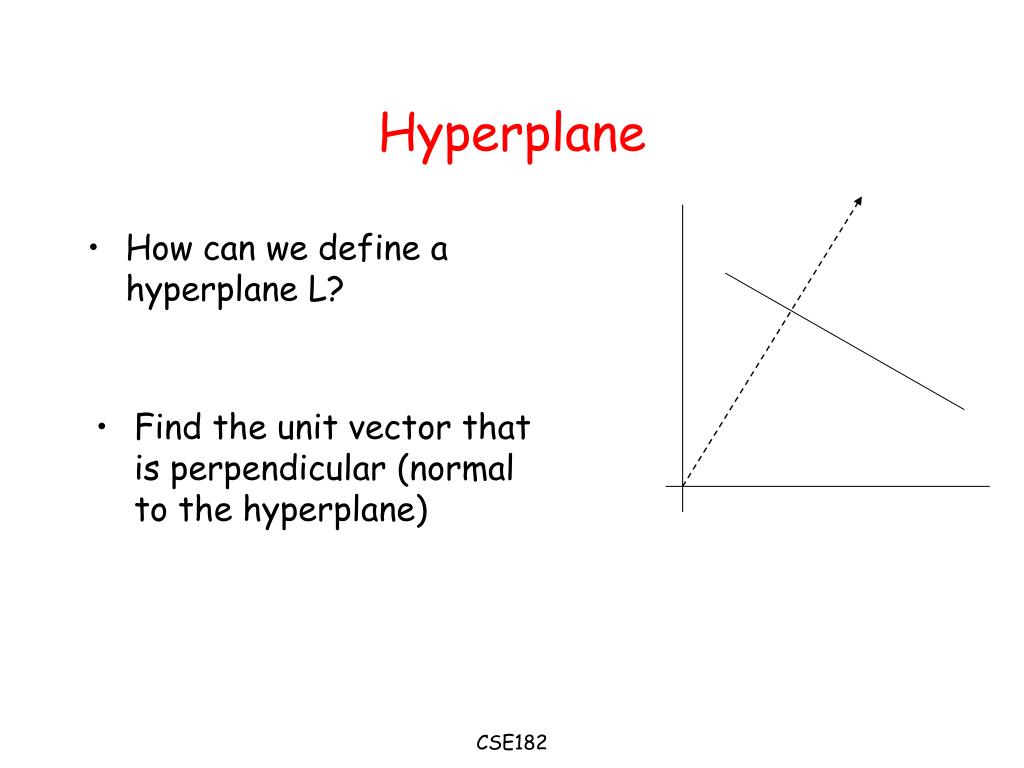

SVM is a supervised machine learning algorithm where we try to find a hyperplane that best separates the two classes. Note: Don’t confuse SVM with logistic regression. Both algorithms try to find the best hyperplane, but the main difference is that logistic regression is a probabilistic approach, whereas the support vector machine is based on a statistical approach.

There can be an infinite number of hyperplanes that classify the two classes perfectly. Now the question is: which hyperplane does SVM select? It selects the hyperplane with the maximum margin, that is, the maximum distance between the two classes.

When to use logistic regression vs Support vector machine?

Depending on the number of features you have, you can choose either logistic regression or SVM. SVM works best when the dataset is small and complex. It is usually advisable to try logistic regression first and see how it performs; if it fails to give good accuracy, you can go for SVM without any kernel (I will talk more about kernels in a later section).

Logistic regression and SVM without any kernel have similar performance, but depending on your features, one may be more efficient than the other.

Types of Support Vector Machine

Linear SVM: We can use Linear SVM only when the data is perfectly linearly separable. Perfectly linearly separable means that the data points can be classified into 2 classes by using a single straight line (if 2D).

Non-Linear SVM: When the data is not linearly separable, that is, when the data points cannot be separated into 2 classes by using a straight line (if 2D), we use more advanced techniques such as the kernel trick to classify them. In most real-world applications we do not find linearly separable data points, hence we use the kernel trick to handle them.

Now let’s define two main terms which will be repeated again and again in this article:

Support Vectors: These are the points that are closest to the hyperplane. A separating line will be defined with the help of these data points.

Margin: It is the distance between the hyperplane and the observations closest to the hyperplane (the support vectors). In SVM, a large margin is considered a good margin. There are two types of margins: hard margin and soft margin. I will talk more about these two in a later section.

Image Source: Author

Mathematical Intuition behind Support Vector Machine

Many people skip the math intuition behind this algorithm because it is pretty hard to digest. In this section, we’ll try to understand each step working under the hood. SVM is a broad topic and people are still doing research on this algorithm. If you are planning to do research, then this might not be the right place for you. Here we will cover only the part that is required for implementing this algorithm. You must have heard about the primal formulation, the dual formulation, Lagrange multipliers, etc. I am not saying these topics aren’t important, but they are more important if you are planning to do research in this area.
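To make the ideas of hyperplane, support vectors and margin a bit more concrete, here is a minimal sketch in plain Python. The toy dataset and the two candidate hyperplanes (`diagonal` and `vertical`) are made up for illustration and are not from this article. The sketch does not train an SVM; it only measures the margin of each hand-picked candidate and keeps the larger one, which is the selection rule SVM applies over all possible hyperplanes.

```python
import math

# Toy 2D dataset (hypothetical values): each entry is ((x, y), label),
# with label -1 for one class and +1 for the other.
points = [
    ((1.0, 1.0), -1), ((2.0, 1.0), -1), ((1.0, 2.0), -1),
    ((4.0, 4.0), 1), ((5.0, 4.0), 1), ((4.0, 5.0), 1),
]

def signed_distance(w, b, x):
    """Signed distance from point x to the hyperplane w . x + b = 0."""
    return (w[0] * x[0] + w[1] * x[1] + b) / math.hypot(*w)

def separates(w, b, data):
    """True if every point lies on the side of the hyperplane matching its label."""
    return all(label * signed_distance(w, b, x) > 0 for x, label in data)

def margin(w, b, data):
    """Distance between the hyperplane and the closest observation."""
    return min(abs(signed_distance(w, b, x)) for x, _ in data)

def support_vectors(w, b, data, tol=1e-9):
    """Points whose distance to the hyperplane equals the margin."""
    m = margin(w, b, data)
    return [x for x, _ in data if abs(abs(signed_distance(w, b, x)) - m) <= tol]

# Two hand-picked candidate hyperplanes, written as (w, b).
candidates = {
    "diagonal": ((1.0, 1.0), -5.5),  # the line x + y = 5.5
    "vertical": ((1.0, 0.0), -3.0),  # the line x = 3
}

# Both candidates separate the classes; keep the one with the larger margin.
valid = {name: wb for name, wb in candidates.items() if separates(*wb, points)}
best = max(valid, key=lambda name: margin(*valid[name], points))

for name, (w, b) in valid.items():
    print(name, round(margin(w, b, points), 3), support_vectors(w, b, points))
print("selected:", best)
```

Both lines classify the toy data perfectly, so accuracy alone cannot choose between them. The margin can, and the points returned by `support_vectors` are the only ones that determine it.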
